FAQ

Frequently asked questions

List of questions frequently asked by our users.

Kafka Magic displays Key as a byte array. How can I find my message using a string Key?

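If you know that the message Key is a string, you can convert Context.Key from bytes to a string with a single line of code. The conversion maps each byte to one character and strips NUL characters, so it suits ASCII keys (multi-byte UTF-8 characters will not decode correctly this way):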

let key = String.fromCharCode.apply(String, Context.Key).replace(/\0/g, '');

If a Kafka topic contains messages with different schemas, how can I filter messages using Schema Id?

SchemaId is a new field in the Context object. It can be used in a Kafka Magic query the same way as any other message or metadata field. Here is an example of a query that selects only messages serialized with Schema Id = 5:

return Context.SchemaId === 5;

How to run a complex transformation for a large number of messages in a Kafka topic?

Kafka Magic lets you create complex automation scripts that combine filtering, transformation, publishing, and many other operations on the cluster. Navigate to the “Automate Tasks” page and start working on your script. The Kafka Magic editor helps with IntelliSense suggestions and JavaScript validation.

Here is an example of an automation script:

let sourceTopic = Magic.getTopic('local', 'telecom_italia_data');
let targetTopicName = 'test-target-' + new Date().getTime();
let targetTopic = Magic.createTopic('local', targetTopicName);
let pubOptions = new KafkaMagic.PublishingOptions();
pubOptions.SchemaSubject = 'telecom_italia_data-value';

// process messages coming from the source topic
sourceTopic.process(true, 100, context => {
    context.Message.CountryCode = 1;
    targetTopic.publishMessage(context.Message, true, pubOptions);
    Magic.reportProgress(context.Message);
});

// validate messages in the target topic
let results = targetTopic.search(true, 100, context => { return true; });
Magic.reportProgress(`Found messages in target topic: ${results.length}`);
Magic.reportProgress(results);

This script reads up to 100 messages from the “telecom_italia_data” topic, sets the “CountryCode” field of each message to 1, and publishes the messages to the target topic.

When finished publishing, the script reads messages back from the target topic to validate the operation.
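The per-message logic in a script like this can be factored into a plain function, which makes it possible to check the transformation before running it against a real topic. The following is a minimal sketch under that assumption: `setCountryCode` is a hypothetical helper name, not part of the Kafka Magic API, while the `Magic`, `process`, and `publishMessage` calls are used the same way as in the script above. The `typeof Magic` guard is also an assumption; it only lets the same code load in a plain JavaScript engine where the Kafka Magic objects do not exist.

```javascript
// Hypothetical helper (not part of the Kafka Magic API):
// sets CountryCode on the message in place and returns the same message.
function setCountryCode(message, countryCode) {
    message.CountryCode = countryCode;
    return message;
}

// Run the Kafka Magic part only where the Magic object is available.
if (typeof Magic !== 'undefined') {
    let sourceTopic = Magic.getTopic('local', 'telecom_italia_data');
    let targetTopic = Magic.createTopic('local', 'test-target-' + new Date().getTime());
    let pubOptions = new KafkaMagic.PublishingOptions();
    pubOptions.SchemaSubject = 'telecom_italia_data-value';

    // transform each message with the helper, then publish it
    sourceTopic.process(true, 100, context => {
        targetTopic.publishMessage(setCountryCode(context.Message, 1), true, pubOptions);
    });
}
```

Keeping the transformation in one small function also means that a change to the rule (for example, a different field or value) touches a single place in the script.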

How to count or aggregate data from all messages in a topic?

If you need to calculate statistics (counters, aggregators) for some or all messages in a Kafka topic, you can use the scripting functionality of Kafka Magic - the “Automate Tasks” page.

Here is an example of such a script:

let topic = Magic.getTopic('local', 'telecom_italia_data');

let stats = {
    "SquareIdCount": 0,
    "TimeIntervalCount": 0,
    "CountryCodeCount": 0,
    "SmsInActivityCount": 0,
    "SmsOutActivityCount": 0,
    "CallInActivityCount": 0,
    "CallOutActivityCount": 0,
    "InternetTrafficActivityCount": 0
};

let counter = 0;

Magic.reportProgress("Started");

topic.process(true, 1000000, c => {
    if (c.Message.SquareId != null) stats.SquareIdCount++;
    if (c.Message.TimeInterval != null) stats.TimeIntervalCount++;
    if (c.Message.CountryCode != null) stats.CountryCodeCount++;
    if (c.Message.SmsInActivity != null) stats.SmsInActivityCount++;
    if (c.Message.SmsOutActivity != null) stats.SmsOutActivityCount++;
    if (c.Message.CallInActivity != null) stats.CallInActivityCount++;
    if (c.Message.CallOutActivity != null) stats.CallOutActivityCount++;
    if (c.Message.InternetTrafficActivity != null) stats.InternetTrafficActivityCount++;
    counter++;
    if (counter % 10000 == 0) Magic.reportProgress(counter);
});

Magic.reportProgress(stats);

The script declares an aggregating object that holds counters for non-null values of message fields. In the topic.process call, the limit on the total number of messages is set to 1,000,000, so the script stops after reaching this number of messages or at the end of the topic.

Every 10,000 messages the script displays the current counter value to report progress. When finished, the script displays the collected statistics.

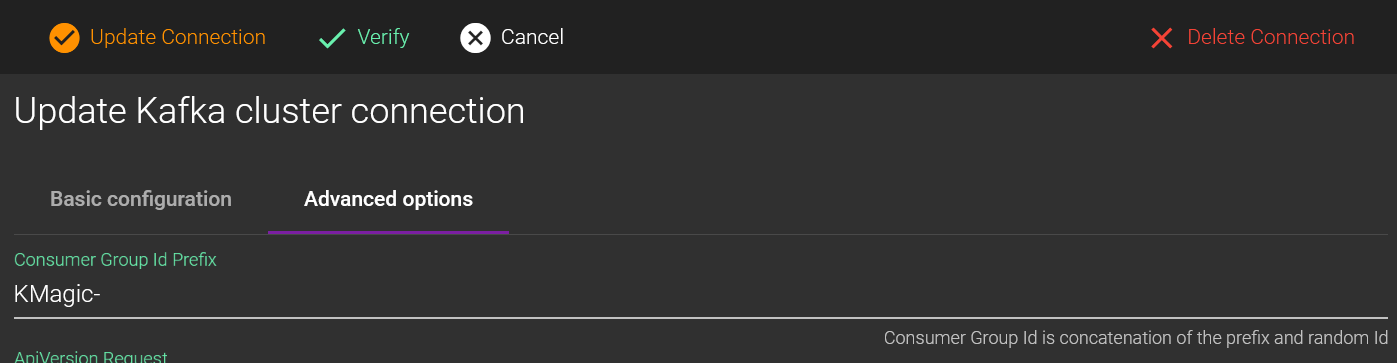
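When the list of fields is long, the repeated `if` lines can be replaced by a loop over field names. The sketch below is one way to do that, not the only one: `countNonNull` and the `fields` list are hypothetical names introduced for illustration, and the `typeof Magic` guard is an assumption that lets the code load outside Kafka Magic. The `Magic.getTopic`, `process`, and `Magic.reportProgress` calls are used as in the script above.

```javascript
// Hypothetical helper (not part of the Kafka Magic API):
// for every listed field whose value is not null or undefined,
// increments the counter stored under "<field>Count".
function countNonNull(stats, message, fields) {
    for (const field of fields) {
        const key = field + 'Count';
        if (message[field] != null) stats[key] = (stats[key] || 0) + 1;
    }
    return stats;
}

// Field names to count; adjust to the schema of your topic.
const fields = ['SquareId', 'TimeInterval', 'CountryCode'];
const stats = {};

// Run the Kafka Magic part only where the Magic object is available.
if (typeof Magic !== 'undefined') {
    let topic = Magic.getTopic('local', 'telecom_italia_data');
    topic.process(true, 1000000, c => { countNonNull(stats, c.Message, fields); });
    Magic.reportProgress(stats);
}
```

Unlike the script above, this version creates a counter only when a field is first seen with a value, so a field that is always null does not appear in the result with a count of 0.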

How to authenticate a Kafka client by Consumer Group Id?

When a Kafka broker is configured for authenticated access, you can set up ACLs that take the Consumer Group prefix into account when authorizing client apps.

By default, Kafka Magic generates the Consumer Group Id as KMagic-{random id}, so you can use the prefix KMagic in your ACL. It is highly recommended to change the default prefix to enhance your security. You can do that on the Kafka Magic Cluster Configuration page.

How to change the Kafka Magic port number for the desktop app?

Behind the scenes, Kafka Magic uses the standard Kestrel web server, so most of the Kestrel configuration options apply.

You can change the port number by setting an environment variable, for example with setx ASPNETCORE_URLS "http://localhost:5432" on Windows, or in the appsettings.json file:

{
    "Kestrel": {
        "Endpoints": {
            "Http": {
                "Url": "http://localhost:5432"
            }
        }
    }
}

How to persist cluster configuration across container updates?

By default, the Docker container version of the Kafka Magic app stores its configuration in memory.

To switch to file storage, set the configuration through environment variables. The names of the configuration environment variables use the KMAGIC_ prefix, so you will need to create these variables:

KMAGIC_CONFIG_STORE_TYPE: "file"

KMAGIC_CONFIG_STORE_CONNECTION: "Data Source=PATH_TO_THE_CONFIG_FILE;"

KMAGIC_CONFIG_ENCRYPTION_KEY: "ENTER_YOUR_KEY_HERE"

You can specify configuration parameters in a docker-compose.yml file.

version: '3'
services:
  magic:
    image: "digitsy/kafka-magic"
    ports:
      - "8080:80"
    volumes:
      - .:/config
    environment:
      KMAGIC_CONFIG_STORE_TYPE: "file"
      KMAGIC_CONFIG_STORE_CONNECTION: "Data Source=/config/KafkaMagicConfig.db;"
      KMAGIC_CONFIG_ENCRYPTION_KEY: "ENTER_YOUR_KEY_HERE"

How to preserve cluster connection settings during an upgrade to a new version?

To keep the Kafka Magic cluster connection settings, you need to preserve the configuration file KafkaMagicConfig.db. Note that the file name can be changed with environment variables (see above).

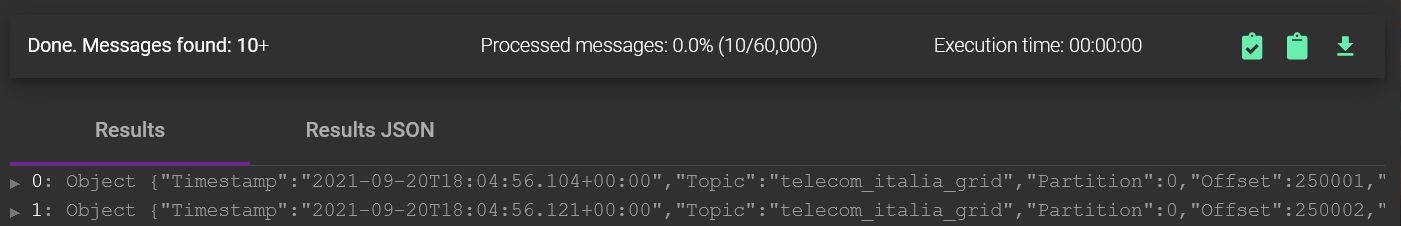

How to save data and scripts into a file?

On most pages you will see green icons. Clicking one lets you save data to the clipboard or to a file.